Welcome to Science with Shrike! Having covered basic entry requirements for graduate studies, I’m going to move forward to discussing the academic publishing enterprise over the next several posts: the good, the bad, the ugly, the centralization trends and the decentralization trends. Today I will give a broad overview of article types, publishing expectations and finding articles. Next week will cover how credit is assigned for papers and how to judge journals. After that, we will tackle the Open Access scam/Plan S(hame) and peer review. After we get through all of that, we’ll have the foundation to talk about decentralizing science, and the existential threat journals/publishers face.

First up is describing the game board and the pieces involved in this key area of academia. Publishing is one of the two key components of academia (grants being the other). “Publish or perish” is a very accurate description of survival in academia. However, different people count publications differently, and some count more than others. Publishing is also a very lucrative business, so there are lots of scam journals out there. Let’s break down scientific publishing by its various components:

Types of publications

Publications come in many different shapes and forms. At good universities, there are really only two types that matter: Primary research and everything else. Primary research articles are those papers presenting a new finding or conclusion, based on the data presented in the paper. Journals occasionally have short formats for really exciting ideas without much data, as well as more traditional ‘article formats’. Producing primary research articles is the mainstay of academic research.

There are also a lot of other non-primary research article types. The most common one is a review article. This type of article synthesizes the extant literature on a subject into a coherent narrative, and ideally provides some critical evaluation of the literature and/or shows the new horizons/outstanding questions in the field. If you’re new to a field, reviews are a great help for getting up to speed. The other large non-research article type is a methods paper. These articles focus on how to perform a method, along with typical results. One journal (Journal of Visualized Experiments) even does videos of the methods. It turns out somewhat like a cooking show, but it is helpful to see some of the techniques.

From Cell's website. Research, resource, theory, matters arising, preview and review are all possible article types.

Next, there are a variety of other invited article types, like ‘commentaries’, ‘news and views’, ‘previews’, ‘opinion’, ‘editorials’, etc. Many of these very short articles highlight recent advances, often in the journal itself, while others offer hot takes on the state of biomedical science. The number of these kinds of articles seems to correlate pretty well with how self-important the journal thinks it is. Nature has a whole ton of these, whereas Journal of Biological Chemistry or Journal of Immunology only have a few highlights and/or editorials.

The last two broad article types of note are ‘correspondence’/‘letter to the editor’ (“matters arising” for Cell) and corrections/retractions. Correspondence is usually reader-submitted: you claim (usually with data) that an article published by the journal has a critical flaw or is otherwise wrong. The journal usually invites the original authors to reply to your letter, and may publish both. Corrections are issued by the journal when there is some error in the manuscript (anything from a mislabeled figure to ‘we accidentally duplicated a panel’) that can be fixed, and retractions when there is a fundamental error that invalidates the study. Note that being wrong usually does not warrant a retraction; there either needs to be a huge, glaring error that somehow got through peer review, some sort of ethical error, or an author realizes that there was a serious mistake in the manuscript that would not be apparent to peer review. For example, if you as a PI published a paper about the importance of potassium in a system, and then find out that your student was measuring potassium completely wrong, and the conclusions in the article are based on entirely artifactual measurements, ethically you should retract the paper. Ethical misconduct would be things like fabricating data, stealing others’ data, etc.

Out of all of these article types, you really only need to worry about primary research articles, reviews, methods papers and corrections/retractions. Corrections and retractions are the top concern (and are usually linked on the article’s entry), primary research is second, and reviews/methods are third (depending on whether you need to learn about a topic, or how to do something).

Hot takes and opinions can be helpful if you want to see what others are thinking, or have enough time to read one, but not enough time to think about the actual paper. They’re basically the scientific version of social media before social media existed. The switch to online formats cost them some attention because most of the younger generations do not browse a given journal anymore. Instead, they just search for individual articles. Social media is still in its infancy for science because many of the most influential scientists are a mix of Boomers who barely understand PowerPoint, and people too focused on solving a scientific problem to devote time to professional social media.

Productivity

How many publications should one be producing in academia? A very rough guideline for primary research productivity is 1-2/year for postdocs and early career faculty, 2+/year for faculty and 1-2 every 4 years for a graduate student. However, that guideline is very rough, and there are lots of exceptions to it. Complicating the matter is the fact that not all primary research publications are created equal. Ultimately, productivity (# of pubs/year) matters in two areas: promotion/tenure and getting grants. In both cases, how it is judged will depend on either the reviewer or the individual committee/department/institution. You should be able to get an idea of what your local committee is looking for, and plan accordingly. At top places, they may want one or more Tier 1 publications (journal tiers discussed next week; Tier 1 = best, Tier 4 = lowest worth considering). At lower ranked places, they might include middle author PLoS ONE papers (a Tier 4 journal) and/or reviews as ‘good’. For postdocs, at least one Tier 1 paper is ideal (along with grant funding), but the more papers in good journals, the better. Some departments require grad students to publish prior to graduating, others do not.

This need to produce leads researchers to a conundrum: how to balance steady publication output (keeping the # of publications high) with the quality of the publications. In general, there might be 25 figures in a Nature paper (5 in the main paper and 20 in the supplement), whereas there might be 2-3 figures in the Journal of Nobody Cares (if that; Shrike has seen a single table as the ‘results’ section in low ranked journals). On one hand, this is why some journals are objectively better than others. On the other hand, this leaves opportunities for people to try to maximize their ROI on publications by publishing just the bare minimum to qualify as a paper. Why publish 7 figures in a Tier 4 journal when you can publish 2 papers with 3-4 figures each? Double your publication count without generating more data! A paper crafted with the barest minimum of data is referred to as a “Minimum Publishable Unit”. The term is usually derogatory because it implies someone is just trying to game the system rather than contribute knowledge. The Tier 4 journals certainly love this because you pay to publish each article. The downside is that if you publish lots of weak papers, you get a reputation in your field for doing ‘minimum publishable units’. That may negatively affect you politically. Additionally, if a reviewer for a Tier 1-3 journal thinks you’re just trying to do the bare minimum, they usually get crankier and more inclined to reject your paper.

Finding Articles

Some argue that in an ideal world, it is the quality of your research that should stand out to the reader and be valued, independent of your pre-existing scientific stature, place it was published, or the subject matter. The reality is that there is an information overload, so we need a way to sift useful information from worthless and scam information.

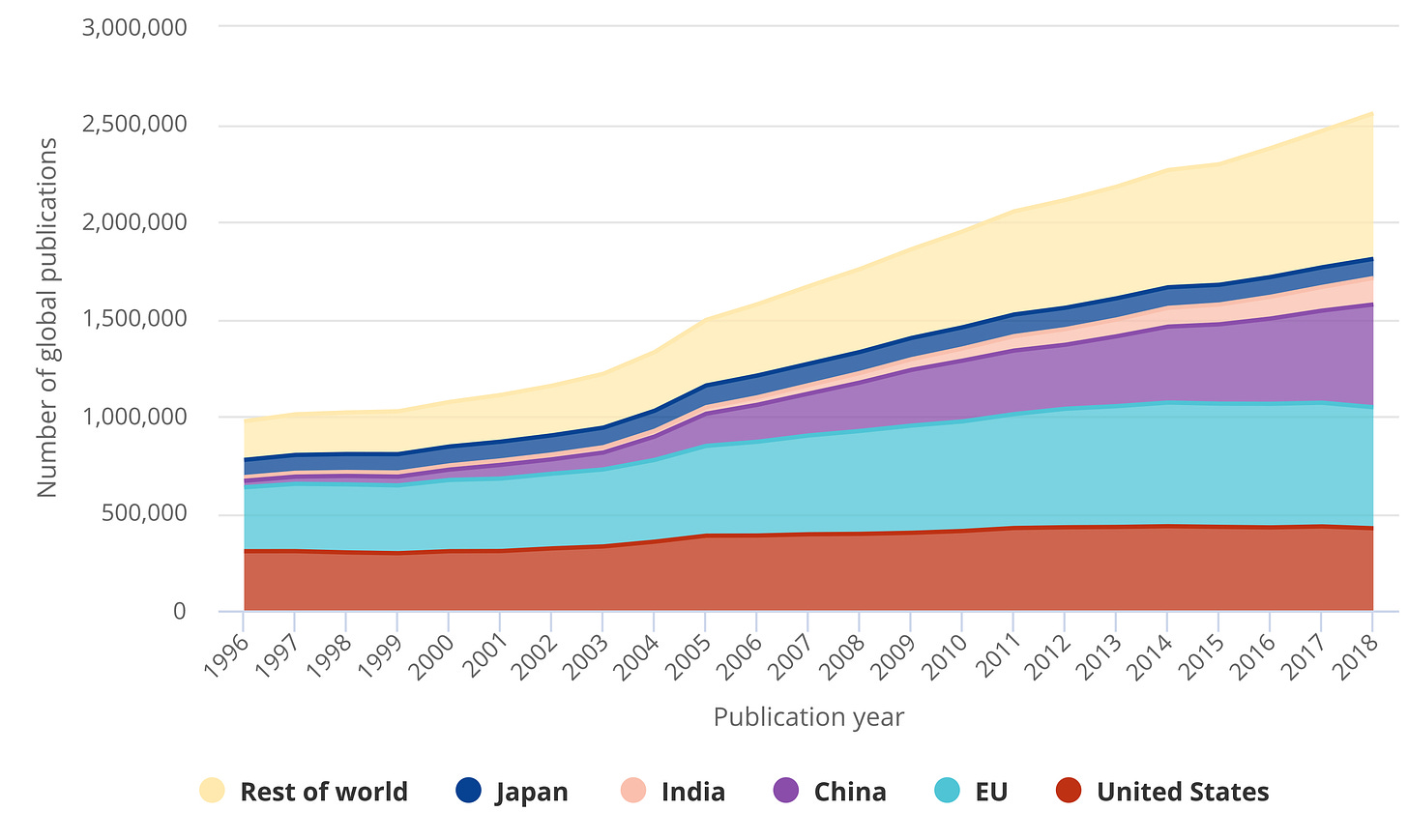

From the 2020 NSF report on science/engineering publications by country.

The least biased way to sort articles is via keyword search. The primary search tool in biomedical science is PubMed (just type pubmed.gov into the address bar). PubMed pulls from the MEDLINE database of journal articles and provides the citation information, abstract and usually links to the article. If you are used to DuckDuckGo or another modern search engine, you’ll find that PubMed is a little archaic. It’s better at handling typos than it used to be, but it’s still fairly particular about search strings. However, you can automate it to send you email alerts when new hits to a query come up. Also, it will search all fields for your query, so you can do things like find out who is publishing on a given research topic in a particular city, or just look for reviews. The former is helpful if you are looking for a collaborator to do something (e.g., a search for ‘zebrafish omaha’ will give you people in Omaha publishing on zebrafish). One caveat is that the person with the expertise could be a co-author from somewhere else, and it obviously doesn’t pick up those who have not yet published. However, it is usually faster than browsing university websites. A search for ‘covid review’ gives you mostly reviews on the subject (in this case COVID-19). If you get 24,000+ results, you will need some other way to filter. PubMed gives you filters you can apply, and is starting to institute ‘best match’ instead of just ‘most recent’. You can also narrow by journal. Note that not all journals are indexed in PubMed. However, for biomedical science, it is usually the very new and/or scam journals that are left out. The downside of keyword searching alone is that you miss all the articles not related to your keyword, so you may not find out about the really big things happening in science.
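If you would rather script searches like the ones above than type them into the search box, here is a minimal sketch in Python. It only builds the query string and the request URL for NCBI’s E-utilities `esearch` endpoint; it does not send anything. The function names (`build_query`, `esearch_url`) are made up for illustration. Note one deliberate swap: the searches described above are plain all-fields searches, whereas this sketch narrows them with PubMed field tags (`[Affiliation]`, `[Publication Type]`, `[Journal]`), so the hits will not be identical.

```python
from urllib.parse import urlencode

# NCBI E-utilities search endpoint for programmatic PubMed queries.
ESEARCH_URL = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def build_query(keywords, affiliation=None, reviews_only=False, journal=None):
    """Combine free-text keywords with optional PubMed field tags.

    Hypothetical helper: the tags narrow the search instead of relying
    on PubMed's default all-fields matching.
    """
    parts = [keywords]
    if affiliation:
        # Matches the author affiliation field, e.g. a city name.
        parts.append(f"{affiliation}[Affiliation]")
    if reviews_only:
        # Restricts hits to articles indexed as reviews.
        parts.append("Review[Publication Type]")
    if journal:
        # Quoted so a multi-word journal title is treated as one phrase.
        parts.append(f'"{journal}"[Journal]')
    return " AND ".join(parts)


def esearch_url(query, max_results=20):
    """Return the esearch URL for a query (no network call is made here)."""
    params = {
        "db": "pubmed",
        "term": query,
        "retmax": max_results,
        "retmode": "json",
    }
    return f"{ESEARCH_URL}?{urlencode(params)}"


if __name__ == "__main__":
    # Who in Omaha is publishing on zebrafish?
    print(esearch_url(build_query("zebrafish", affiliation="omaha")))
    # Reviews on COVID only.
    print(esearch_url(build_query("covid", reviews_only=True)))
```

Pasting either printed URL into a browser should return a JSON list of PubMed IDs. If you plan to run many queries, check NCBI’s usage guidelines first, since they rate-limit automated requests.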

While you can search by author on PubMed, missing initials can be challenging (if you search for Smith JE, any articles they published as Smith J will be missed), as are common names (search for ‘Wang’ and see what you get). Scopus is more useful for looking up individual authors, but it is subscription-based. Scopus will also give you author metrics (h-index, total # of citations, publications and citations per publication) and links to articles. It has some trouble following name changes, though, so if you change your name, you may have to contact them. Searching by author is helpful for keeping up with the competition, leaders in the field, or people you know. The bias here is toward the author, since you only get their articles.

Another way to sort articles is by journal. If you looked at ‘covid review’, you saw that many are in no-name journals (which I alternately call either the Journal of Nobody Cares or the Journal Nobody’s Heard Of). You’ll notice a little bit of bias in the previous comments. Filtering by journal is necessary to rule out the scams and low tier journals, but can be taken to excess when only the very top journals are considered. However, it is important to note that more eyes end up on the best journals. You can filter by journal either by browsing their table of contents (which you can get sent to you via email), following them on Twitter (the emailed table of contents is still superior) or by limiting the search on PubMed. You will miss the papers that don’t make the journals you read, but you will get a more comprehensive view of what is happening in biomedical science, and a better sense of what is currently hot.

One final way to sort articles is via reference mining. Too lazy to dig information up on PubMed? Start with a review or that most recent paper you are trying to duplicate/learn about. Pull the reference citations and then read those to get more information on a given subject. The introductions in those research papers can be mined for additional references for background. There are two caveats here. The first: be careful if you engage in the even lazier ‘these people cited this article for this statement, so I will too without reading the original paper’ when writing a paper. Just because someone cited a paper doesn’t mean they got it right. A review cited one of Shrike’s papers for something that was not shown in the paper, and now Shrike’s paper is widely credited for that finding.

The other caveat is blindness to other opinions/published findings. Most labs have a dominant narrative and cite people/groups who support that narrative. They may miss contrary findings, smaller journal articles, or other viewpoints if they’ve only ever mined the references used by previous members of the lab group. To use that same paper of Shrike’s as an example, a competing group extended the findings in Shrike’s paper, and then published in a better journal five years later without citing Shrike’s paper. As the competing people sold it, it seemed novel and exciting, because it left out the fact that 80% of the paper was published 5 years ago in Shrike’s paper. Sloppy/selective reading of the literature led them to miss Shrike’s paper. While vindictiveness can also be a factor (what is the likelihood Shrike ever cites that group’s papers in the future?), it’s usually not worth the time and trouble figuring out which it is. If you really want to confront people over it, you contact them and/or the journal and ask that they cite your paper or issue a correction. I don’t give this high odds of success (unless you have a lot of political power), though the odds of making one or more enemies are high. Many PIs don’t bother checking references on their own papers carefully, so whichever references the grad student or postdoc uses will be the ones in the paper. There are certain exceptions (PIs tend to check more carefully for high impact journals and usually catch key findings), but it’s surprisingly easy to make enemies just by being a clueless grad student and missing key literature. This is a pitfall at peer review, too. Moral of the story is to be very careful giving credit and to have a wide command of the literature.

When screening articles, you’ll quickly see that the title and the abstract are the two most important pieces of information. The title needs to be the one line summary of the paper, and it needs to convey information to the busy reader, and excite other readers into opening the abstract. However, clickbait is frowned upon (e.g., “Salmonella absolutely HATES this defense pathway”, “You won’t believe this SARS-CoV-2 immune evasion trick!”, or “Five hacks your immune system uses to defeat viruses”). If the title is interesting, people may read your abstract. Make sure the abstract has your key points, because most people don’t read the full paper (hopefully they do before citing, but as you already know, scientists can be lazy, too!).

That’s a lot to digest for this week. Next week, we’ll move on to criteria/credit for authorship and how to judge journals and academics.